Graph Neural Network: the next step in deep learning

In the development of artificial intelligence, the learning process is crucial. Machine learning (and deep learning in particular) is used to train algorithms so that software learns from data instead of following hand-written rules. Facial recognition, for example, is based on this technology. Artificial neural networks are the foundation of many machine learning approaches: the software’s algorithms are designed as a network of nodes, loosely modeled on the human nervous system. Graph neural networks are a fairly new approach. How does this technology work?

How do graph neural networks work?

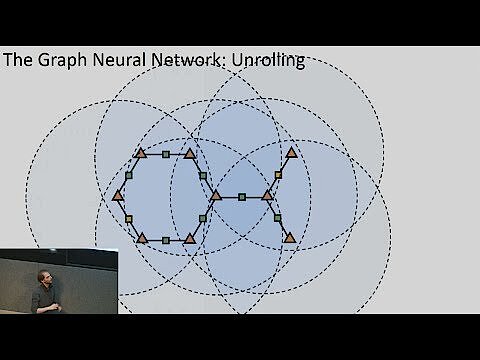

Graph neural networks (GNNs) are a newer subtype of artificial neural networks that operate on graphs. To understand GNNs, we first need to know what is meant by ‘graph’ in this context. In computer science, the term refers to a particular way of structuring data. A graph consists of points (nodes or vertices) that are connected in pairs by edges. To give a simple example: Person A and Person B can be represented as points on a graph. Their relationship to each other is then the connection. Were the connections to disappear, we’d be left with a mere collection of people or of data.

One popular subtype of graph is the tree. Here, the nodes are connected in such a way that there is always exactly one path (possibly across several nodes) between any two nodes. Edges can be directed or undirected. In a graph, the connections are just as important as the data itself. Every edge and every node can be labeled with attributes.
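To make these terms concrete, here is a minimal sketch in plain Python. The people, the relation labels, and the helper names `build_adjacency` and `is_tree` are illustrative assumptions, not part of any particular library. It stores nodes and edges with attributes and checks the tree property using the fact that an undirected graph is a tree when it is connected and has exactly one edge fewer than it has nodes.

```python
from collections import deque

# Hypothetical example data: people as nodes, relationships as edges.
nodes = {
    "A": {"name": "Person A"},
    "B": {"name": "Person B"},
    "C": {"name": "Person C"},
}
# Each undirected edge is a pair of nodes plus an attribute dictionary.
edges = [
    ("A", "B", {"relation": "friends"}),
    ("B", "C", {"relation": "colleagues"}),
]


def build_adjacency(nodes, edges):
    """Map every node to the set of its direct neighbors (undirected)."""
    adjacency = {node: set() for node in nodes}
    for source, target, _attributes in edges:
        adjacency[source].add(target)
        adjacency[target].add(source)
    return adjacency


def is_tree(nodes, edges):
    """Return True if the undirected graph is connected with n - 1 edges."""
    if not nodes or len(edges) != len(nodes) - 1:
        return False
    adjacency = build_adjacency(nodes, edges)
    start = next(iter(nodes))
    seen = {start}
    queue = deque([start])
    # Breadth-first search to find every node reachable from the start.
    while queue:
        current = queue.popleft()
        for neighbor in adjacency[current]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return len(seen) == len(nodes)


print(is_tree(nodes, edges))
```

Adding a third edge between A and C would create a second path between every pair of nodes, so the same check would then report that the graph is no longer a tree.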

A graph is, therefore, well suited to representing real-world circumstances. And that is the challenge for deep learning: to make natural conditions understandable to software. A graph neural network makes that possible: in a GNN, nodes collect information from their neighbors by repeatedly exchanging messages. The information that is passed on is recorded in the properties of the receiving node, and this is how the graph neural network learns.
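The message exchange described above can be sketched in a few lines of Python. This is a deliberately simplified illustration under stated assumptions: each node carries a single number as its property, and every round replaces that number with the average of the node's own value and its neighbors' values. Real GNNs use feature vectors and learned weights for this step, and the function name `message_passing_round` and the example values are hypothetical.

```python
def message_passing_round(features, adjacency):
    """Return new node features after one round of neighbor averaging."""
    updated = {}
    for node, own_value in features.items():
        # Each neighbor sends its current value as a "message".
        messages = [features[neighbor] for neighbor in adjacency[node]]
        # Combine the node's own value with the incoming messages.
        updated[node] = (own_value + sum(messages)) / (1 + len(messages))
    return updated


# Assumed toy graph: A - B - C, so A and C are not directly connected.
adjacency = {"A": ["B"], "B": ["A", "C"], "C": ["B"]}
features = {"A": 1.0, "B": 0.0, "C": 0.0}

after_one = message_passing_round(features, adjacency)
after_two = message_passing_round(after_one, adjacency)
print(after_one)
print(after_two)
```

After one round, node C is still unaffected by A, because the two are not neighbors. After the second round, part of A's original value has reached C via B, which shows how repeated exchanges spread information across the graph.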

Want to find out more about graph neural networks and delve deeper into the subject? The Natural Language Processing Lab at Tsinghua University has published a comprehensive list of scientific work on GNNs on GitHub.

Where are graph neural networks used?

Until now, researchers have mainly explored what graph neural networks can do in principle. The potential areas of application they suggest, though, are diverse. Wherever relationships play a major role in the situations or processes that a neural network is meant to represent, it makes sense to use GNNs.

- Financial markets: Market forecasts can be made more reliable by understanding the transactions.

- Search engines: The connections between websites are critical in evaluating the sites’ importance.

- Social networks: Better understanding relationships between people can help optimize social media.

- Chemistry: The composition of molecules can be represented via graphs, and thus can be transferred to GNNs.

- Knowledge: Understanding the links between information is crucial to providing knowledge in the best way possible.
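To illustrate the chemistry item in the list above, here is a small sketch of a molecule stored as a graph. It uses water (H2O) as the example, with atoms as nodes and bonds as edges. The variable names and the helper `bond_counts` are assumptions chosen for illustration, not the API of a chemistry library.

```python
# Water (H2O): atoms are nodes, covalent bonds are edges.
atoms = {0: "O", 1: "H", 2: "H"}
bonds = [(0, 1), (0, 2)]  # each pair of atom indices is one single bond


def bond_counts(atoms, bonds):
    """Count how many bonds each atom takes part in (its node degree)."""
    counts = {index: 0 for index in atoms}
    for first, second in bonds:
        counts[first] += 1
        counts[second] += 1
    return counts


counts = bond_counts(atoms, bonds)
for index, element in atoms.items():
    print(element, counts[index])
```

A representation like this, a set of labeled nodes plus a list of edges, is the kind of input a GNN could work with.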

Graph neural networks are already being used in image and speech recognition. Unstructured, natural information can potentially be processed more effectively with a GNN than with traditional neural networks.

Advantages and disadvantages of graph neural networks

Graph neural networks help with challenges that traditional neural networks haven’t yet been able to deal with adequately. Graph-based data couldn’t be processed correctly because the connections between the data points weren’t given enough weight. With GNNs, though, the edges are just as important as the nodes themselves.

However, other problems that accompany neural networks can’t be solved with graph neural networks. The black box problem, in particular, remains unsolved. It’s difficult to understand how a (graph) neural network comes to its final conclusion because the complex algorithms’ internal processes are difficult to retrace from the outside.