What is AI speech recognition?

AI speech recognition converts spoken language into text, often in real time. It powers voice assistants, dictation tools and automated customer interactions.

What is AI speech recognition and how does automatic speech recognition (ASR) work?

AI speech recognition, also known as Automatic Speech Recognition (ASR), converts spoken language into machine-readable text. The system starts by analyzing the audio signal and extracting acoustic features that describe properties such as frequency, pitch and volume. It then maps these features to phonemes, the smallest units of sound that distinguish one word from another in a language.

ASR systems use statistical and AI models to predict words and sentence structure. These models are trained on large speech datasets to recognize patterns and take context into account. In general, the larger and more varied the training data, the more accurate and reliable the transcriptions. The resulting text is either output in real time or passed on for further AI processing. This is what allows voice assistants and AI call bots to understand requests and respond immediately.
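To make the phoneme-to-word step more concrete, here is a toy sketch. It assumes the phonemes have already been recognized and uses a tiny, hypothetical pronunciation lexicon with simplified ARPAbet-style symbols. Real systems score many competing hypotheses statistically instead of doing a single greedy lookup.

```python
# Toy illustration: turn an already-recognized phoneme sequence into words.
# LEXICON is a hypothetical, hand-written pronunciation dictionary.
LEXICON = {
    ("HH", "AH", "L", "OW"): "hello",
    ("W", "ER", "L", "D"): "world",
    ("K", "AO", "L"): "call",
    ("M", "IY"): "me",
}

# Longest pronunciation in the lexicon, used to bound the search window.
MAX_LEN = max(len(p) for p in LEXICON)


def phonemes_to_words(phonemes: list[str]) -> list[str]:
    """Greedy longest-match segmentation of phonemes into lexicon words."""
    words = []
    i = 0
    while i < len(phonemes):
        for length in range(min(MAX_LEN, len(phonemes) - i), 0, -1):
            candidate = tuple(phonemes[i:i + length])
            if candidate in LEXICON:
                words.append(LEXICON[candidate])
                i += length
                break
        else:
            # No lexicon entry starts here: mark it unknown, skip one phoneme.
            words.append("<unk>")
            i += 1
    return words


print(phonemes_to_words(["HH", "AH", "L", "OW", "W", "ER", "L", "D"]))
# ['hello', 'world']
```

Greedy longest match keeps the sketch short; a statistical decoder would instead weigh alternative segmentations against each other.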

Modern AI speech recognition uses end-to-end architectures such as RNN-Transducers (RNN-T) or transformer-based models. These combine acoustic and language modeling in a single model trained in one process, which improves context awareness and typically reduces errors compared to traditional pipelines built from separately trained components.
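End-to-end models typically output a probability for every character or subword token for each short audio frame, and a decoder turns these scores into text. The text names RNN-T. The sketch below instead shows greedy decoding for Connectionist Temporal Classification (CTC), a simpler, closely related end-to-end technique. Both approaches use a special "blank" symbol for frames that add no new character. The per-frame probabilities are made up for illustration.

```python
BLANK = "_"  # special CTC symbol meaning "no new character in this frame"


def ctc_greedy_decode(frame_probs: list[dict[str, float]]) -> str:
    """Pick the most likely symbol per frame, merge repeats, drop blanks."""
    best = [max(probs, key=probs.get) for probs in frame_probs]
    output = []
    previous = None
    for symbol in best:
        # Repeats in consecutive frames count once; a blank between two
        # identical symbols keeps them separate ("l _ l" -> "ll").
        if symbol != previous and symbol != BLANK:
            output.append(symbol)
        previous = symbol
    return "".join(output)


# Hypothetical per-frame probabilities from an acoustic model.
frames = [
    {"h": 0.9, "i": 0.05, BLANK: 0.05},
    {"h": 0.6, "i": 0.1, BLANK: 0.3},
    {"h": 0.1, "i": 0.2, BLANK: 0.7},
    {"h": 0.05, "i": 0.9, BLANK: 0.05},
]
print(ctc_greedy_decode(frames))  # hi
```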

- Live in under 5 minutes

- Works with your existing number

- Sounds natural and professional

What technologies power AI speech recognition?

AI speech recognition combines several technologies that process and interpret speech and convert it into text.

Neural networks

Neural networks form the foundation of modern speech recognition. They consist of interconnected artificial neurons that learn to recognize patterns in audio data, such as recurring sound sequences and typical speech intonation. Training on large amounts of speech data allows them to distinguish between similar sounds such as “b” and “p” and to segment speech accurately.

Deep learning

Deep learning uses multilayer neural networks to model complex speech patterns. Speech varies widely depending on the speaker, accent, dialect and background noise, and traditional algorithms often fall short in the face of this variability. Deep learning captures these variations, detects patterns in large datasets and handles unfamiliar speech more robustly.
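The idea of layers of artificial neurons can be shown with a tiny forward pass in plain Python. The weights below are arbitrary placeholders, not trained values. The three output classes ("b", "p" and silence) are a hypothetical example. A real acoustic model learns millions of parameters from data.

```python
import math


def dense(inputs, weights, biases, activation=None):
    """One fully connected layer: each neuron computes a weighted sum plus a bias."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        z = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(activation(z) if activation else z)
    return outputs


def relu(z):
    """Common activation function: passes positive values, zeroes out negatives."""
    return max(0.0, z)


def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Placeholder (untrained) weights: 3 input features -> 4 hidden neurons -> 3 classes.
W1 = [[0.5, -0.2, 0.1], [0.3, 0.8, -0.5], [-0.6, 0.1, 0.9], [0.2, 0.2, 0.2]]
b1 = [0.0, 0.1, -0.1, 0.05]
W2 = [[1.0, -0.5, 0.3, 0.2], [-0.4, 0.9, 0.1, 0.0], [0.1, 0.1, -0.8, 0.5]]
b2 = [0.0, 0.0, 0.0]
CLASSES = ["b", "p", "silence"]

features = [0.7, 0.2, 0.4]               # three acoustic features for one audio frame
hidden = dense(features, W1, b1, relu)   # hidden layer
probs = softmax(dense(hidden, W2, b2))   # output layer: one probability per class
best = CLASSES[probs.index(max(probs))]
print(best, [round(p, 3) for p in probs])
```

Deep networks repeat the `dense` step many times. Training adjusts the weights so that the correct class receives the highest probability.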

Feature extraction

Before a neural network can analyze speech, the system must extract relevant acoustic features from the raw audio signal. This step is called feature extraction. Typical acoustic features include:

- Formants: Resonance frequencies of the vocal tract that are essential for recognizing vowels.

- Spectrograms: Representations of how a signal's energy is distributed across frequencies over time, often displayed as images.

- Mel-Frequency Cepstral Coefficients (MFCCs): Compact sets of coefficients, computed from a spectrum warped to the mel scale of human pitch perception, that capture the most important sound information for AI models.

These features reduce the amount of data and highlight speech-relevant information, allowing AI speech recognition systems to process audio more efficiently.
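The first steps of feature extraction can be sketched with the standard library alone. The code splits a signal into short overlapping frames and applies a Hann window. It then computes a magnitude spectrum per frame with a plain discrete Fourier transform, and together these spectra form a simple spectrogram. The sketch also includes the commonly used mel-scale formula that MFCC pipelines build on. The 25 ms frame and 10 ms hop are typical choices, not requirements. The synthetic 1 kHz tone stands in for real speech. Production code would use an FFT library instead of this slow O(N²) DFT.

```python
import cmath
import math


def frame_signal(samples, frame_len, hop):
    """Split a signal into overlapping frames (a trailing partial frame is dropped)."""
    return [samples[start:start + frame_len]
            for start in range(0, len(samples) - frame_len + 1, hop)]


def magnitude_spectrum(frame):
    """Magnitudes of DFT bins 0..N/2 for one Hann-windowed frame (slow O(N^2) DFT)."""
    n = len(frame)
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(windowed)))
            for k in range(n // 2 + 1)]


def hz_to_mel(f_hz):
    """Common mel-scale formula used when building MFCC filter banks."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


SAMPLE_RATE = 8000                     # samples per second
FRAME_LEN = SAMPLE_RATE * 25 // 1000   # 25 ms -> 200 samples
HOP = SAMPLE_RATE * 10 // 1000         # 10 ms -> 80 samples

# Synthetic 0.1 s test tone at 1 kHz instead of real speech.
signal = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(800)]

spectrogram = [magnitude_spectrum(f) for f in frame_signal(signal, FRAME_LEN, HOP)]
bin_hz = SAMPLE_RATE / FRAME_LEN       # frequency resolution: 40 Hz per bin
first = spectrogram[0]
peak_bin = max(range(len(first)), key=first.__getitem__)
print(len(spectrogram), "frames, peak at", peak_bin * bin_hz, "Hz")
print("1000 Hz is about", round(hz_to_mel(1000)), "mel")
```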

Language models

Language models refine ASR output by adding linguistic context to the acoustic analysis. They range from classic statistical n-gram models to large language models such as GPT. Language models predict which words are likely to follow one another and which sentence structures make sense. This allows the system to choose the right words and interpret the meaning correctly, even when individual words are unclear or there is background noise. Language models play a key role in turning raw speech-to-text output into semantically accurate results.
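A classic way to see how a language model helps is to rescore acoustically similar hypotheses. The sketch below trains a tiny bigram model with add-one smoothing on a handful of made-up sentences. It then uses the model to choose between "recognize speech" and the similar-sounding "wreck a nice beach". Real systems combine such scores with acoustic scores and use far larger models.

```python
import math
from collections import Counter

# Hypothetical miniature training corpus.
corpus = [
    "we recognize speech with ai",
    "systems recognize speech in real time",
    "the tool can recognize speech",
    "we walked on the beach",
]

unigrams = Counter()  # how often each word appears as a context
bigrams = Counter()   # how often each word pair appears
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    unigrams.update(tokens[:-1])
    bigrams.update(zip(tokens, tokens[1:]))

vocab_size = len(set(unigrams) | {"</s>"})


def log_prob(sentence: str) -> float:
    """Log probability under a bigram model with add-one (Laplace) smoothing."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    return sum(
        math.log((bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size))
        for prev, word in zip(tokens, tokens[1:])
    )


hypotheses = ["recognize speech", "wreck a nice beach"]
best = max(hypotheses, key=log_prob)
print(best)  # recognize speech
```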

Natural Language Processing (NLP)

ASR converts speech into text. Natural Language Processing goes a step further by interpreting that text. NLP identifies intent, analyzes context and evaluates grammar and sentence structure. This allows voice assistants, call bots and transcription tools to process voice commands and extract meaning from transcribed speech. By combining ASR and NLP, AI speech recognition systems can not only recognize words but also understand the intent behind them.
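The step from transcript to intent can be sketched with simple keyword rules. The intent names and keywords below are hypothetical. Production NLP systems typically use trained classifiers or language models rather than hand-written lists.

```python
import re

# Hypothetical intents and their trigger keywords.
INTENT_KEYWORDS = {
    "book_appointment": {"appointment", "book", "schedule"},
    "opening_hours": {"open", "hours", "closing"},
    "speak_to_human": {"human", "agent", "person"},
}


def detect_intent(transcript: str) -> str:
    """Return the intent whose keywords overlap most with the transcript."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    scores = {intent: len(words & keywords)
              for intent, keywords in INTENT_KEYWORDS.items()}
    best_intent, best_score = max(scores.items(), key=lambda item: item[1])
    return best_intent if best_score > 0 else "unknown"


print(detect_intent("Hi, I'd like to book an appointment for Tuesday"))
# book_appointment
```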

Which factors affect the accuracy of AI speech recognition?

Several factors directly influence how accurately AI speech recognition converts speech into text. Even small differences in pronunciation, volume or background noise can change the result.
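Accuracy is commonly reported as the word error rate (WER). This is the number of word substitutions, deletions and insertions needed to turn the system's output into a reference transcript, divided by the number of words in the reference. A minimal implementation based on word-level edit distance might look like this:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # dist[i][j] = edit distance between the first i reference words
    # and the first j hypothesis words.
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i          # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j          # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[len(ref)][len(hyp)] / len(ref)


print(word_error_rate("I want to book a table", "I wanna book a table"))
# 0.3333333333333333 (2 errors / 6 reference words)
```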

Language and dialect

Each language has its own sound patterns, grammar and word order. That’s why ASR systems typically require dedicated models for each language. Languages also vary by region. Pronunciation changes, syllables may be dropped and vocabulary can differ. For example, “want to” may be pronounced as “wanna” in casual American English, which a standard model may misinterpret.

Accents

Accents change how sounds and syllables are pronounced. Systems trained only on standard pronunciation often struggle with variation. For example, a speaker from the southern United States may pronounce certain vowels differently, which can affect transcription if the model was not trained on similar speech patterns. High accuracy therefore depends on training data that reflects a wide range of accents.

Background noise

Background noise from traffic, nearby conversations and machinery distorts the audio signal. Poor microphones and echo also reduce signal quality. ASR systems use noise suppression and filtering to compensate, but transcription accuracy still drops in noisy environments. For example, an AI system in a call center has to process speech alongside keyboard noise and the hum of air conditioning units.
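The impact of background noise is often quantified as the signal-to-noise ratio (SNR): the ratio of signal power to noise power, expressed in decibels. The sketch below computes it for synthetic signals. A pure tone stands in for speech and random Gaussian noise stands in for room noise, so the numbers are purely illustrative.

```python
import math
import random


def power(samples):
    """Mean squared amplitude of a signal."""
    return sum(x * x for x in samples) / len(samples)


def snr_db(signal, noise):
    """Signal-to-noise ratio in decibels: 10 * log10(P_signal / P_noise)."""
    return 10 * math.log10(power(signal) / power(noise))


random.seed(0)  # reproducible example
rate = 16000
speech_like = [0.5 * math.sin(2 * math.pi * 220 * t / rate) for t in range(rate)]
quiet_noise = [random.gauss(0, 0.01) for _ in range(rate)]
loud_noise = [random.gauss(0, 0.2) for _ in range(rate)]

print(f"quiet room: {snr_db(speech_like, quiet_noise):.1f} dB")
print(f"noisy room: {snr_db(speech_like, loud_noise):.1f} dB")
```

A lower SNR means the speech is closer to the noise level, which is exactly the situation in which recognition accuracy tends to drop.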

Linguistic variability

Speech also varies in volume, speed and pitch, and all of these can affect recognition. Softly spoken or unclear speech may be harder to recognize than clear, steady speech. Emotions such as excitement or anger also change speech patterns and may reduce accuracy.
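Differences in volume can be reduced before recognition by normalizing the loudness of the input. The sketch below scales a signal to a target root-mean-square (RMS) level. The target of 0.1 and the gain limit of 20 are arbitrary assumptions. Real systems may combine this with other steps, such as automatic gain control.

```python
import math


def rms(samples):
    """Root-mean-square amplitude, a simple measure of loudness."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def normalize_rms(samples, target_rms=0.1, max_gain=20.0):
    """Scale samples toward target_rms and clip the result to [-1, 1].

    The gain is capped so that near-silence is not amplified into loud noise.
    """
    current = rms(samples)
    if current == 0:
        return list(samples)  # pure silence: nothing to scale
    gain = min(target_rms / current, max_gain)
    return [max(-1.0, min(1.0, x * gain)) for x in samples]


soft = [0.01 * math.sin(2 * math.pi * 200 * t / 8000) for t in range(8000)]
loud = [0.8 * math.sin(2 * math.pi * 200 * t / 8000) for t in range(8000)]
print(round(rms(normalize_rms(soft)), 3), round(rms(normalize_rms(loud)), 3))
# 0.1 0.1
```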

Recording quality

Recording quality directly affects recognition accuracy. Microphone type, sampling rate and compression all influence the input signal. High-quality microphones produce clearer signals. Phone lines or basic headsets, by contrast, can limit the frequency range and introduce compression artifacts or background noise, all of which reduce speech recognition accuracy.
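One reason phone audio is harder to recognize is bandwidth. A digital signal can only represent frequencies up to half its sampling rate (the Nyquist frequency). As a result, narrowband telephony at 8 kHz cuts off everything above 4 kHz, where fricatives such as "s" and "f" carry much of their energy. The small calculation below compares 8 kHz with the 16 kHz rate that many speech recognition models expect. The list of rates is illustrative.

```python
def nyquist_hz(sample_rate_hz: int) -> float:
    """Highest frequency a signal sampled at this rate can represent."""
    return sample_rate_hz / 2


def raw_bitrate_kbps(sample_rate_hz: int, bits_per_sample: int, channels: int = 1) -> float:
    """Uncompressed PCM bitrate in kilobits per second."""
    return sample_rate_hz * bits_per_sample * channels / 1000


for label, rate in [("narrowband phone", 8000),
                    ("wideband / typical ASR input", 16000),
                    ("studio audio", 48000)]:
    print(f"{label}: up to {nyquist_hz(rate):.0f} Hz, "
          f"{raw_bitrate_kbps(rate, 16):.0f} kbit/s as 16-bit mono PCM")
```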

Where is AI speech recognition typically used?

AI speech recognition is widely used in business and everyday life. Tools like the IONOS AI Receptionist show how companies can use it to automate customer interactions and handle them more efficiently.

Dictation tools

Dictation tools convert speech directly into text. This speeds up writing notes, emails and reports, while improving accessibility. High-quality dictation tools reduce errors and capture even complex technical terms correctly. Many tools also support the writing process with real-time correction and autocomplete. They also adapt to individual speech patterns over time, which further improves accuracy.

Transcription

Transcription tools convert audio and video into text. This is useful for conferences, podcasts and documentation purposes. ASR analyzes recordings, separates speakers and creates searchable transcripts. Advanced tools also detect pauses, filler words and sentence structure. This helps companies create documentation faster, improve archiving and reduce manual work.
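At its core, a searchable transcript is a list of timed segments with speaker labels. The sketch below shows one possible data structure and a simple keyword search. The field names and sample content are hypothetical, since each transcription service defines its own output format.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str    # label assigned by speaker separation (diarization)
    start_s: float  # start time in seconds
    end_s: float    # end time in seconds
    text: str


def search(segments: list[Segment], keyword: str) -> list[Segment]:
    """Return all segments whose text contains the keyword (case-insensitive)."""
    needle = keyword.lower()
    return [s for s in segments if needle in s.text.lower()]


transcript = [
    Segment("Speaker 1", 0.0, 4.2, "Welcome to today's project meeting."),
    Segment("Speaker 2", 4.5, 9.8, "The budget review is scheduled for Friday."),
    Segment("Speaker 1", 10.1, 13.0, "Great, let's move the budget item first."),
]

for seg in search(transcript, "budget"):
    print(f"[{seg.start_s:6.1f}s] {seg.speaker}: {seg.text}")
```

Keeping timestamps on each segment lets a search result jump straight to the matching point in the original recording.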

Voice assistants

Voice assistants such as Siri, Alexa and Google Assistant respond to spoken commands in real time. They perform a variety of tasks, like controlling smart home devices, helping with scheduling and answering questions. Voice assistants combine AI speech recognition with NLP to understand meaning and context, and real-time speech recognition keeps these interactions smooth and natural.

AI phone assistants

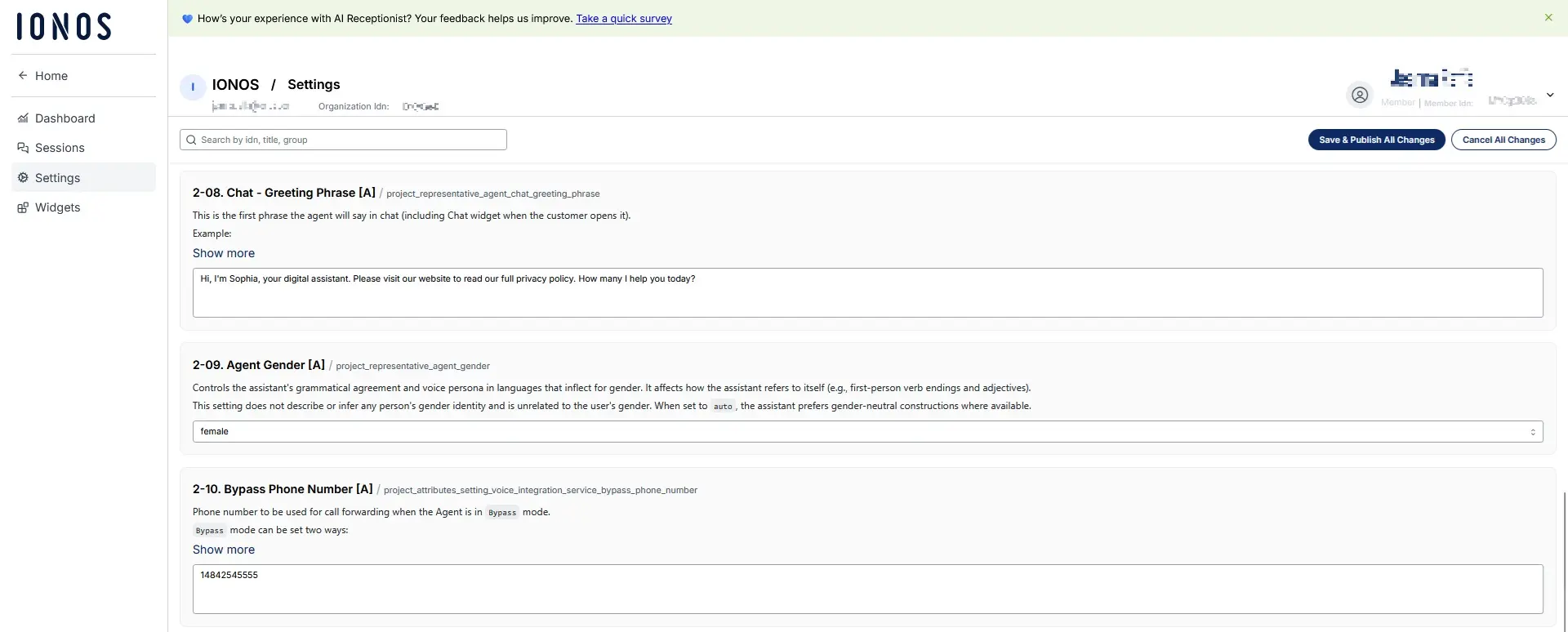

AI-based phone assistants use AI speech recognition to understand and handle customer requests automatically. The IONOS AI Receptionist is one example. It transcribes customer inquiries over the phone in real time, interprets them and responds appropriately to each situation. This allows companies to reduce waiting times, improve the customer experience and take pressure off support staff.

The IONOS AI Receptionist integrates with existing phone systems, so it’s ready to use right away. It can also be customized for specific needs, showing how AI speech recognition delivers real value in everyday business use.

Which AI speech recognition tools and APIs are available?

Several leading tools and APIs support AI speech recognition:

- Google Speech-to-Text API

- Microsoft Azure Speech

- Amazon Transcribe

- OpenAI Whisper

These tools vary in language support, accuracy, real-time capabilities and pricing. Google offers broad language coverage and strong cloud integration. Microsoft focuses on enterprise use and security. Amazon Transcribe provides scalable streaming transcription for call centers. Whisper, which OpenAI has also released as an open-source model that can be self-hosted, offers strong multilingual support and performs well in noisy conditions. Most providers offer APIs that integrate easily into existing applications. Companies should choose a tool or API based on the language support, real-time capabilities and level of data protection they require.
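Because providers differ in features and pricing, it can help to keep application code independent of any single API. The sketch below defines a small, hypothetical `Transcriber` interface with a fake backend for testing. `Transcriber`, `Transcript`, `FakeTranscriber` and `handle_call` are illustrative names only and are not part of any provider's SDK. A real adapter would wrap the vendor's official client library inside its `transcribe` method.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Transcript:
    text: str
    language: str


class Transcriber(Protocol):
    """Hypothetical interface that each provider adapter would implement."""

    def transcribe(self, audio: bytes, language: str) -> Transcript: ...


class FakeTranscriber:
    """Stand-in backend that returns canned text; useful for tests."""

    def __init__(self, canned_text: str) -> None:
        self.canned_text = canned_text

    def transcribe(self, audio: bytes, language: str) -> Transcript:
        return Transcript(text=self.canned_text, language=language)


def handle_call(audio: bytes, engine: Transcriber) -> str:
    """Application code depends only on the interface, not on a vendor."""
    result = engine.transcribe(audio, language="en-US")
    return result.text.strip()


print(handle_call(b"\x00\x01", FakeTranscriber("  I'd like to book a table.  ")))
```

With this design, switching providers means writing one new adapter class rather than changing every place that handles calls.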

What are the challenges and limitations of AI speech recognition?

AI speech recognition works well, but it is not perfect. Homophones, unfamiliar accents and unclear pronunciation can lead to errors. Background noise and technical issues can also reduce accuracy, and technical terms and proper names are not always recognized correctly.

Several measures help. ASR systems become more accurate when trained on larger and more diverse datasets, and noise-reduction algorithms improve the quality of the audio they receive. Custom language models can be adapted to specific industries or company terminology. Feedback loops, where corrections are fed back into the model, further improve accuracy over time. Finally, combining ASR with NLP helps reduce cases where the meaning is interpreted incorrectly.
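Custom vocabularies are one practical way to handle technical terms and proper names. The sketch below uses the standard library's `difflib.get_close_matches` to replace misrecognized words with the closest entry from a company-specific term list. This simple fuzzy post-correction is a swapped-in illustration, not a custom language model. The term list and the similarity cutoff of 0.8 are assumptions.

```python
import difflib

# Hypothetical company-specific vocabulary.
DOMAIN_TERMS = ["IONOS", "Kubernetes", "PostgreSQL", "webhook"]
_lookup = {term.lower(): term for term in DOMAIN_TERMS}


def correct_terms(transcript: str, cutoff: float = 0.8) -> str:
    """Replace words that closely resemble a domain term with that term."""
    corrected = []
    for word in transcript.split():
        matches = difflib.get_close_matches(word.lower(), list(_lookup), n=1, cutoff=cutoff)
        corrected.append(_lookup[matches[0]] if matches else word)
    return " ".join(corrected)


print(correct_terms("deploy it on kubernetis and add a webhok"))
# deploy it on Kubernetes and add a webhook
```

A cutoff that is too low would start "correcting" ordinary words, so in practice it would need tuning against real transcripts.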

How does AI speech recognition fit in with data protection and GDPR?

AI speech recognition processes sensitive personal data such as voice recordings, conversation content and contact details, which makes strong data protection measures essential. Companies must clearly explain what data they collect, how they use it and how long they store it. Audio and text data should always be stored in encrypted form to prevent unauthorized access. Where possible, data should also be anonymized or pseudonymized to better protect user identity. Under the GDPR, however, pseudonymized data still counts as personal data. Unless another legal basis applies, users must consent before voice recordings are processed, and they must be informed about their rights to access and delete their data. For cloud-based services, companies should also check where data is stored and which security standards and certifications apply.
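Pseudonymizing transcripts can be as simple as replacing direct identifiers with keyed hashes. The sketch below finds phone-number-like strings with a deliberately simple regular expression, which is an assumption. It replaces each one with an HMAC-SHA256 token, so the same digits always map to the same token without revealing the number. The key must be stored separately and securely. Real deployments need far more robust detection of personal information.

```python
import hashlib
import hmac
import re

# Simplified, assumed pattern for phone numbers such as "+1 555 123 4567".
PHONE_PATTERN = re.compile(r"\+?\d[\d\s\-/]{6,}\d")


def pseudonymize(transcript: str, key: bytes) -> str:
    """Replace phone numbers with stable, keyed tokens."""
    def token(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())  # ignore spaces and dashes
        digest = hmac.new(key, digits.encode(), hashlib.sha256).hexdigest()
        return f"<PHONE_{digest[:12]}>"

    return PHONE_PATTERN.sub(token, transcript)


secret_key = b"load-this-from-a-secrets-manager"  # placeholder: never hard-code keys
text = "Please call me back at +1 555 123 4567 or 555-123-4567."
print(pseudonymize(text, secret_key))
```

Using a keyed HMAC rather than a plain hash makes it much harder for someone without the key to recover numbers by hashing every possible phone number.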

The IONOS AI Receptionist meets all these requirements. It processes calls fully in line with the GDPR and runs exclusively on secure servers in the EU. The IONOS AI Receptionist combines automated AI speech recognition with the highest data protection standards. This helps customers feel confident about how their data is handled and reduces legal risk for companies.

The EU AI Act entered into force on August 1, 2024, and its obligations apply in stages over the following years. The act provides a risk-based legal framework for regulating AI systems: requirements for transparency, governance and documentation vary depending on the level of risk involved. Although this law applies within the EU, it can also affect US companies if they offer AI systems on the European market or if the output of their AI systems is used in the EU.