What is retrieval-augmented generation (RAG)?

Retrieval-augmented generation (RAG) is a technique that improves the output of generative language models by retrieving relevant information from external and internal data sources, so that responses are more precise and contextually appropriate. In this article, we introduce the concept of RAG and explain how to use it effectively in your business.

What is retrieval-augmented generation used for?

Retrieval-augmented generation (RAG) is a technology designed to enhance the output of a large language model (LLM). RAG operates in the following way: When a user submits a query, the system first searches a large collection of data to locate relevant information. This data can come from an internal database, the internet or other information sources. Once the relevant data is identified, it is passed to the LLM together with the original query, and the LLM generates a clear and accurate response based on this information.

Large language models (LLMs) play a crucial role in the development of artificial intelligence (AI), especially for intelligent chatbots that rely on natural language processing. The main objective is to build bots capable of accurately responding to user questions across various contexts by accessing reliable sources of knowledge.

Despite their high performance, LLMs have notable limitations. For example, they may give wrong answers if they lack suitable information for a response. Furthermore, since they are trained on extensive text data from the internet and other sources, they can reproduce biases and stereotypes present in that data. The training data is collected at a specific point in time, so their knowledge is confined to that period and is not automatically updated. As a result, users may be given outdated information.

By integrating retrieval-augmented generation (RAG) with large language models (LLMs), many of these limitations can be mitigated. RAG enhances the abilities of LLMs by locating and processing up-to-date and relevant information, leading to more accurate and dependable responses.

How does RAG work?

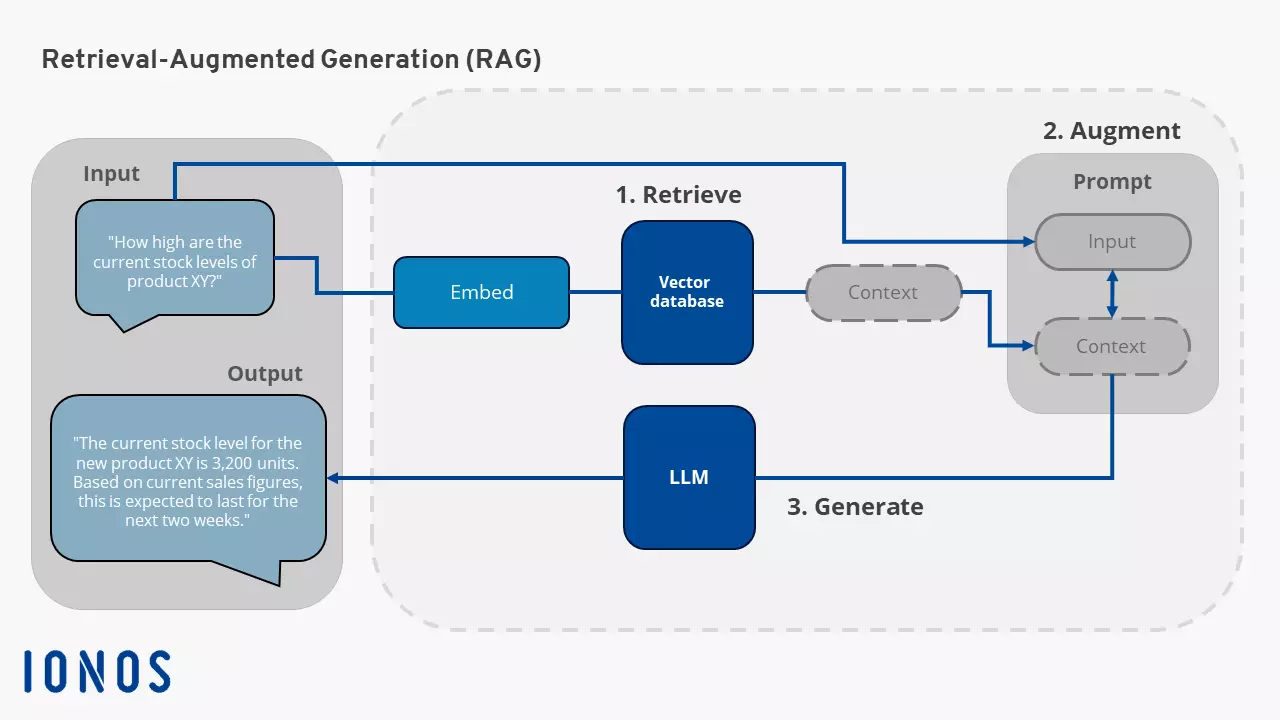

Retrieval-augmented generation consists of several steps. Here is an explanation of the steps RAG takes to generate answers that are more relevant and precise:

Preparing the knowledge base

First, an extensive compilation of texts, datasets, documents or other informational sources needs to be provided. This collection, in addition to the existing LLM training dataset, acts as a knowledge base for the RAG model to access and retrieve relevant information. These data sources can originate from databases, document repositories or other external sources.

How effective a RAG system is depends heavily on the quality and availability of the data it accesses. Incomplete or incorrect data can impair the results.
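
As a rough illustration of the data-quality point above, the following minimal sketch collects raw records into a small knowledge base and drops empty or duplicated entries. The `Document` class, the `build_knowledge_base` function and the sample file names are hypothetical and chosen only for this example; a real pipeline would add its own checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # hypothetical label for where the text came from
    text: str


def build_knowledge_base(raw_records):
    """Keep only usable records: non-empty text, no exact duplicates."""
    seen = set()
    knowledge_base = []
    for source, text in raw_records:
        cleaned = " ".join(text.split())  # collapse repeated whitespace
        if not cleaned or cleaned in seen:
            continue  # skip empty or duplicated entries
        seen.add(cleaned)
        knowledge_base.append(Document(source=source, text=cleaned))
    return knowledge_base


# Hypothetical sample data, including one duplicate and one empty record.
raw_records = [
    ("faq.txt", "Orders ship within two business days."),
    ("faq_copy.txt", "Orders  ship within two business days."),
    ("empty.txt", "   "),
    ("policy.txt", "Returns are accepted within 30 days."),
]
kb = build_knowledge_base(raw_records)
for doc in kb:
    print(doc.source, "->", doc.text)
```

Only the first FAQ entry and the policy entry are kept; the duplicate and the empty record are discarded before anything is indexed.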

Embedding in vector databases

An important aspect of RAG is the use of embeddings. Embeddings are numerical representations of information that allow machine learning models to find similar objects. For example, a model that uses embeddings can find a similar photo or document based on its semantic meaning. These embeddings are typically stored in vector databases, which can be searched quickly and efficiently. To ensure that the information stays up to date, it is important to update the documents regularly and regenerate the vector representations accordingly.
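
To make the idea of comparing numerical representations more concrete, here is a deliberately simplified sketch. It assumes a toy "embedding" based on word counts, which only captures shared words rather than true semantic meaning; production systems use a trained embedding model instead. The function names `embed` and `cosine_similarity` are illustrative.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. Real systems use a trained embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse vectors (1.0 = same direction)."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


invoice = embed("How do I download my invoice?")
billing = embed("Download your invoice from the billing page.")
weather = embed("The weather is sunny today.")

# The billing sentence shares words with the question, the weather one does not.
print(cosine_similarity(invoice, billing))
print(cosine_similarity(invoice, weather))
```

The first score is higher than the second, which is the property a vector search relies on: related texts end up closer together than unrelated ones.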

Retrieving relevant information

When a user request is made, it is first converted into a vector representation and compared with the vectors stored in the vector database. The database then returns the stored entries whose vectors are most similar to the request.
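
The retrieval step can be sketched as a nearest-neighbor search. The example below is a minimal illustration, not a real vector database: the index is a plain Python list, the embedding is a toy word-count vector, and the names `embed`, `retrieve` and `top_k` are assumptions made for this sketch.

```python
import math
import re
from collections import Counter


def embed(text):
    # Stand-in for a real embedding model: plain word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, index, top_k=2):
    """Return the top_k stored texts most similar to the query."""
    query_vector = embed(query)
    scored = [
        (cosine_similarity(query_vector, vector), text) for text, vector in index
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for score, text in scored[:top_k] if score > 0]


documents = [
    "Invoices can be downloaded from the billing page.",
    "Support is available by phone on weekdays.",
    "The billing page also lists past payments.",
]
# The "vector database" here is just a list of (text, vector) pairs.
index = [(doc, embed(doc)) for doc in documents]

results = retrieve("Where is the billing page?", index)
print(results)
```

With this sample data, the two billing-related sentences are returned and the support sentence is left out. A dedicated vector database does the same comparison, but with indexing structures that keep the search fast for large collections.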

Augmenting the input prompt

The retrieved information is inserted into the context of the original prompt using prompt engineering techniques. The expanded prompt includes both the original question and the relevant data, which allows the LLM to generate a more precise and informative response.

Prompt engineering techniques are methods and strategies for designing and optimizing prompts for large language models (LLMs). These techniques involve carefully formulating and structuring prompts to achieve the desired responses and reactions from the model.
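
One simple way to expand a prompt is a fixed template that places the retrieved passages in front of the user's question. The sketch below shows this under stated assumptions: the function name `build_augmented_prompt` and the wording of the instructions are illustrative choices, not a prescribed format.

```python
def build_augmented_prompt(question, passages):
    """Combine retrieved passages and the user's question into one prompt."""
    context = "\n".join(
        f"[{number}] {passage}" for number, passage in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context is not sufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


# Hypothetical passages, as they might come back from the retrieval step.
passages = [
    "Invoices can be downloaded from the billing page.",
    "The billing page also lists past payments.",
]
prompt = build_augmented_prompt("Where can I find my invoice?", passages)
print(prompt)
```

Numbering the passages makes it easier to ask the model to cite which passage it relied on, although that is optional.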

Generating an answer

Once the relevant information has been retrieved and added to the prompt, the response is generated. The LLM takes the information found and uses it to generate a response in natural language. A language model such as GPT-3 is used to “translate” the data into human language.

GPTs (Generative Pre-trained Transformers) use the Transformer architecture and are trained to understand and generate human language. The model is trained in advance on a large amount of text data (pre-training) and then adapted for specific tasks (fine-tuning).
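
Putting the steps together, a RAG request can be sketched as retrieve, augment, generate. The example below is a minimal outline that assumes two pluggable functions: `retrieve` and `generate` are passed in as parameters, and the `fake_retrieve` and `fake_generate` stand-ins are hypothetical placeholders so that the sketch runs without a real vector database or LLM.

```python
def answer_with_rag(question, retrieve, generate, top_k=2):
    """Retrieve context, augment the prompt, then let the model answer."""
    passages = retrieve(question, top_k)
    context = "\n".join(f"- {p}" for p in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    return generate(prompt)


# Hypothetical stand-ins so the sketch runs without external services.
def fake_retrieve(question, top_k):
    knowledge = ["Invoices can be downloaded from the billing page."]
    return knowledge[:top_k]


def fake_generate(prompt):
    # A real system would send the prompt to an LLM here.
    return f"(model output for a prompt of {len(prompt)} characters)"


print(answer_with_rag("Where is my invoice?", fake_retrieve, fake_generate))
```

Keeping retrieval and generation behind separate functions means either part can be swapped out, for example to change the language model without touching the knowledge base.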

What are the advantages of RAG?

Implementing retrieval-augmented generation offers your company numerous advantages, including:

Increased efficiency

Time is money, especially for companies with limited resources. RAG can reduce processing effort because the retrieval phase selects only the most relevant data, which limits the amount of information the model has to process in the generation phase.

Cost savings

Implementing RAG can lead to considerable cost savings. By automating routine tasks and reducing manual searches, staffing costs can be reduced while improving the quality of results. The implementation costs for RAG are also typically lower than those for frequently retraining LLMs.

Up-to-date information

RAG makes it possible to provide current information by connecting the LLM with live feeds from social media, news sites and other regularly updated sources. As long as these sources are kept up to date, responses can reflect the latest and most relevant information.

Faster response to market changes

Companies that can react more quickly and precisely to market changes and customer needs have a better chance of holding their own against the competition. Quick access to relevant information and proactive customer care can set companies apart.

Development and testing options

By managing and modifying the LLM’s information sources, you can adapt the system to evolving requirements or cross-functional applications. Furthermore, access to sensitive information can be restricted to different authorization levels, ensuring that the LLM provides suitable responses. If incorrect answers are generated because the LLM relied on inaccurate sources, those sources can be corrected or removed.
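
Restricting access by authorization level can be sketched as a filter that runs before retrieval, so that restricted documents never reach the prompt. The following is a minimal illustration; the `Document` class, the numeric `min_level` field and the sample texts are hypothetical, and a real system would rely on its existing permission model.

```python
from dataclasses import dataclass


@dataclass
class Document:
    text: str
    min_level: int  # hypothetical: lowest authorization level allowed to read it


def visible_documents(documents, user_level):
    """Return only the documents the user is authorized to see."""
    return [doc for doc in documents if user_level >= doc.min_level]


documents = [
    Document("Office opening hours are listed on the intranet.", min_level=0),
    Document("Internal salary guidelines.", min_level=2),
]

# A level-0 user sees only the public document, a level-2 user sees both.
print([doc.text for doc in visible_documents(documents, user_level=0)])
print([doc.text for doc in visible_documents(documents, user_level=2)])
```

Filtering before retrieval, rather than after generation, means the model cannot leak content it was never given.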

What are different use cases for retrieval-augmented generation?

RAG can be used in numerous business areas to optimize processes:

- Improving customer service: In customer service, responding to customer queries quickly and accurately is crucial. RAG can help by retrieving relevant information from an extensive knowledge base, enabling immediate responses to customer queries in live chats without long waiting times. This relieves the support team and increases customer satisfaction.

- Knowledge management: RAG supports knowledge management by enabling employees to quickly access relevant information without having to search through several folders.

- Onboarding of new employees: New employees can get up to speed faster because they can access all the information they need more easily. Whether it’s technical manuals, training documents or internal guidelines, RAG makes it easy to find and use the information they need.

- Content creation: RAG can assist companies in producing blog posts, articles, product descriptions and other types of content by leveraging its ability to retrieve information from trustworthy sources (internal as well as external) and generate texts.

- Market research: RAG can be used in market research to quickly and accurately retrieve relevant market data and trends. This facilitates the analysis and understanding of market movements and customer behavior.

- Production: In production, RAG can support consumption forecasting and automated workforce scheduling by retrieving historical data. This helps to use resources more efficiently and optimize production planning.

- Product sales: RAG can increase sales productivity by helping sales staff to quickly retrieve relevant product information and make targeted recommendations to customers. This improves sales efficiency and can lead to higher customer satisfaction and increased sales.

Tips for implementing retrieval-augmented generation

Now that you’ve learned about the numerous advantages and areas of application of retrieval-augmented generation (RAG), the question remains: How can you implement this technology in your company? The first step is to analyze your company’s specific needs. Think about the areas where RAG could make the biggest difference. This could be customer service, knowledge management or marketing. Define clear goals that you want to achieve by implementing RAG, e.g. reducing response times in customer service.

There are various providers and platforms that offer RAG technologies. Research them thoroughly and choose a solution that best suits your company’s needs. Pay attention to factors such as user-friendliness, integration capability with existing systems, scalability and, of course, cost.

Once you have chosen an appropriate RAG solution, it’s essential to integrate it into your existing systems and workflows. This might involve connecting it to your databases, CRM systems or other software solutions. Ensuring a seamless integration is vital to fully benefit from RAG technology and avoid any operational disruptions. To facilitate a smooth transition, make sure to provide training and support. A well-trained team can more effectively utilize the benefits of RAG and address any potential issues swiftly.

After implementation, it’s crucial to consistently monitor the performance of the RAG solution. Regularly review the results and identify areas for improvement. Make sure that all data processed by retrieval-augmented generation technology is handled securely and in compliance with relevant data protection regulations. This approach not only safeguards your customers and business but also increases trust in your digital transformation efforts.
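
One possible way to monitor retrieval quality is to keep a small set of test questions with known correct sources and measure how often the right source is retrieved. The sketch below illustrates this idea; the `hit_rate` function, the `fake_retrieve` stand-in and the file names are hypothetical and serve only to make the example runnable.

```python
def hit_rate(test_cases, retrieve, top_k=3):
    """Share of test questions whose expected source appears in the top_k results."""
    if not test_cases:
        return 0.0
    hits = sum(
        1 for question, expected in test_cases if expected in retrieve(question, top_k)
    )
    return hits / len(test_cases)


# Hypothetical retriever and test set, used only to make the sketch runnable.
def fake_retrieve(question, top_k):
    lookup = {
        "How do I get my invoice?": ["billing.md", "faq.md"],
        "How do I reset my password?": ["faq.md"],
    }
    return lookup.get(question, [])[:top_k]


test_cases = [
    ("How do I get my invoice?", "billing.md"),
    ("How do I reset my password?", "account.md"),
]
print(hit_rate(test_cases, fake_retrieve))
```

Tracking a figure like this over time can show whether changes to the knowledge base or the retrieval setup improve or degrade results.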